
3/5/2023 Duplicate scanner windows

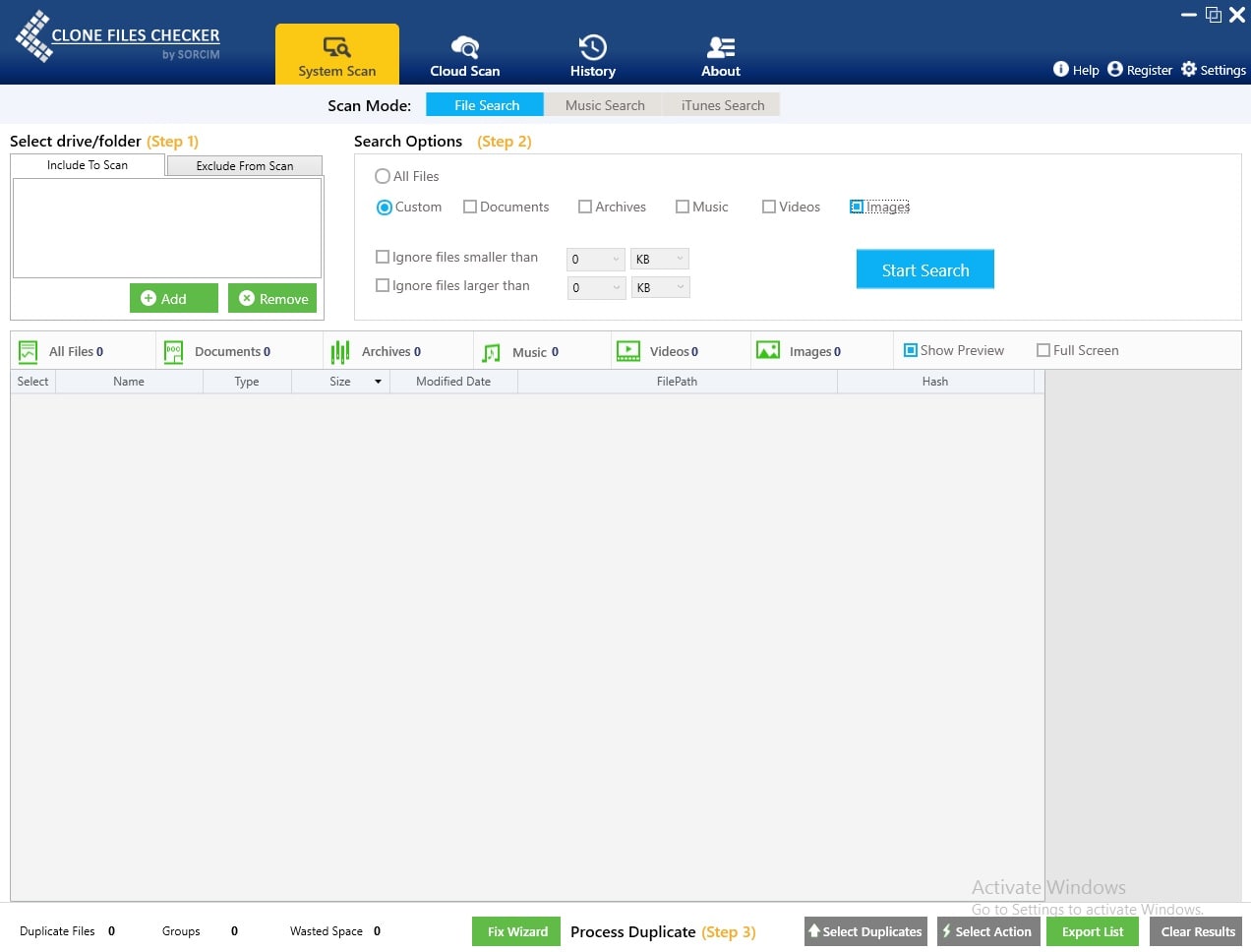

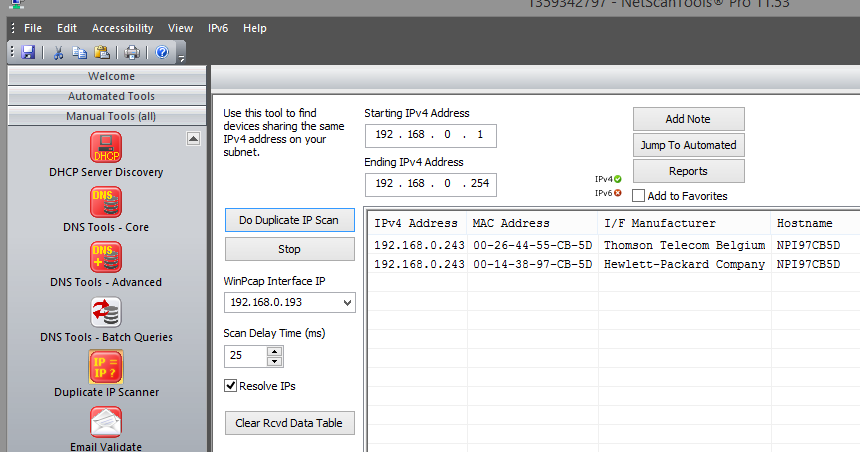

Exact Duplicate Finder conducts a byte-by-byte analysis to ensure that all displayed files are indeed exact copies of other files. Once the scanning is complete, the app displays file groups and locations in a multi-panel layout. This allows you to view the files and quickly remove all duplicates. More than that, it does not require administrator privileges to delete duplicates, unless, of course, you are trying to get rid of system-protected files. As noted, the software is lightweight and free to use. It is compatible with Windows 10, 8, 7, Vista, and XP.

The files are compared with each other directly, and the equal files are determined that way. If two files are not equal from a given point on, reading is interrupted, since no more has to be read to determine that these files are not equal. Because of this, the results are determined much faster than in programs that use hashing algorithms, for which all files have to be read completely. Caching the contents of the files improves performance further. I was able to scan 3 TB worth of data and identify over 40 GB of duplicate files in just a couple of hours, comparing shares over a gigabit network and local hard drives.

Same basic algorithm I use in the series of Linux commands in my prior post. It might prove easier for some to use a Windows-based front end, though, even though it might be slower since it runs over the LAN.
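As a rough illustration of the direct comparison with early exit described above, here is a minimal shell sketch. It is not how Exact Duplicate Finder or any other tool mentioned here is actually implemented. It assumes GNU find (for `-printf`) and file names without tabs or newlines, and the function name `find_dupes_by_compare` is made up for this example. Files are grouped by size, and `cmp -s` stops at the first difference it finds, so unequal files are rarely read to the end.

```shell
#!/bin/sh
# Sketch only: find identical files by direct comparison, without hashing.
# Assumes GNU find (-printf) and file names without tabs or newlines.

tab=$(printf '\t')

# find_dupes_by_compare DIR
# Prints one "first-seen copy<TAB>duplicate" line per duplicate file.
find_dupes_by_compare() {
    list=$(mktemp)   # "size<TAB>path" lines, equal sizes adjacent
    reps=$(mktemp)   # one representative per distinct content of this size
    find "$1" -type f ! -empty -printf '%s\t%p\n' |
        LC_ALL=C sort -t "$tab" -k1,1n -k2 >"$list"

    prev_size=
    while IFS="$tab" read -r size path; do
        if [ "$size" != "$prev_size" ]; then
            : >"$reps"           # new size: earlier files cannot match
            prev_size=$size
        fi
        match=
        while IFS= read -r rep; do
            # cmp -s is silent and stops at the first differing byte
            if cmp -s -- "$rep" "$path"; then
                match=$rep
                break
            fi
        done <"$reps"
        if [ -n "$match" ]; then
            printf '%s\t%s\n' "$match" "$path"
        else
            printf '%s\n' "$path" >>"$reps"
        fi
    done <"$list"
    rm -f "$list" "$reps"
}
```

Calling `find_dupes_by_compare /mnt/user/your_share` would print each duplicate next to the first copy that was seen. Unlike the caching mentioned above, this sketch rereads representatives for every comparison, so it only shows the idea.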

The approach starts by sorting all files by their size, because files can only be equal if they have the same size. The final output is a text file containing the duplicate files.

First, to make the system less likely to crash, since you will be using a lot of RAM, make it less likely to hoard directory entries in memory. You would also want to stop cache_dirs if you have it running.

Next, find and list, with their sizes, files that are not empty. (Make sure you change "your_share" in the next line to the correct name.)

    find "/mnt/user/your_share" ! -empty -type f -links 1 -printf "%s " -exec ls -dQ ' | cut -d" " -f1,3- | uniq -D -w 15 | cut -d" " -f2- >/mnt/disk1/dupes_tmp2

Then, for all that are not unique in their size, get the md5sum of the first 4 MB of the file. If the file is unique in the first 4 MB of its contents, it is unique. No need to check the remainder, as it is not a duplicate. Those that are not unique we'll look at with a full md5 checksum.

Now get the full md5 checksum for the files left as candidates.

    cat /mnt/disk1/dupes_tmp4 | xargs md5sum >/mnt/disk1/dupes_tmp5

Last, sort the md5 checksums, getting rid of the unique files and pairing up the remaining. They are in the file /mnt/disk1/dupes_out.txt.

    sort /mnt/disk1/dupes_tmp5 | uniq -w32 -d --all-repeated=separate | cut -c35- >/mnt/disk1/dupes_out.txt

Each of the command lines above should be typed as a single line with no carriage returns. (It will wrap on the monitor if it gets to the end of a line, just keep typing.) The line that calculates full md5 checksums for your remaining candidate files will take a long time, so do not expect an answer in seconds unless you only have a few files. The path to be searched in the second command must be changed to the name of your user-share.

This is not perfect, but it will get the job done in most cases. It might choke on some special characters in file names, but hopefully, those will not be the dupes.
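The three stages described above (same size, then md5 of the first 4 MB, then full md5) can also be sketched as one script that pipes each stage into the next instead of using temporary files. This is an illustration, not the original poster's commands: the function names `keep_repeated`, `tag_md5`, and `find_dupes_staged` are invented here, and it assumes GNU find, `md5sum`, `awk`, and file names without tabs or newlines.

```shell
#!/bin/sh
# Sketch only: staged duplicate search (size -> md5 of first 4 MB -> full md5).
# Assumes GNU find (-printf), md5sum, awk; no tabs or newlines in file names.

# keep_repeated: stdin is sorted "key<TAB>path" lines; print only the lines
# whose key occurs more than once.
keep_repeated() {
    awk -F '\t' '
        $1 == prev { if (held != "") { print held; held = "" } print; next }
        { prev = $1; held = $0 }
    '
}

# tag_md5 LIMIT: read paths on stdin, print "md5<TAB>path".
# LIMIT is a byte count to hash from the start of each file, or "all".
tag_md5() {
    limit=$1
    while IFS= read -r path; do
        if [ "$limit" = all ]; then
            sum=$(md5sum <"$path")
        else
            sum=$(head -c "$limit" <"$path" | md5sum)
        fi
        # md5sum prints "<hash>  -" for stdin; keep only the hash
        printf '%s\t%s\n' "${sum%% *}" "$path"
    done
}

# find_dupes_staged DIR: print "md5<TAB>path" for every duplicated file,
# with equal checksums on adjacent lines.
find_dupes_staged() {
    find "$1" -type f ! -empty -links 1 -printf '%s\t%p\n' |
        LC_ALL=C sort | keep_repeated | cut -f2- |
        tag_md5 4194304 | LC_ALL=C sort | keep_repeated | cut -f2- |
        tag_md5 all | LC_ALL=C sort | keep_repeated
}
```

Something like `find_dupes_staged /mnt/user/your_share >/mnt/disk1/dupes_out.txt` would write the result to a file. Each stage only passes on files that still have at least one possible twin, so the expensive full checksum runs on as few files as possible.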

I'm looking for something that can scan selective shares on my array for duplicates based on file checksum. Anyone have any experience with anything that would handle that? Most of my array is indexed and orderly, but I do have a couple of areas that are more challenging, and the dupe checker isn't over-the-network friendly.

I would do it entirely on the server, since it is a highly IO-intensive task.
